TL;DR Summary:

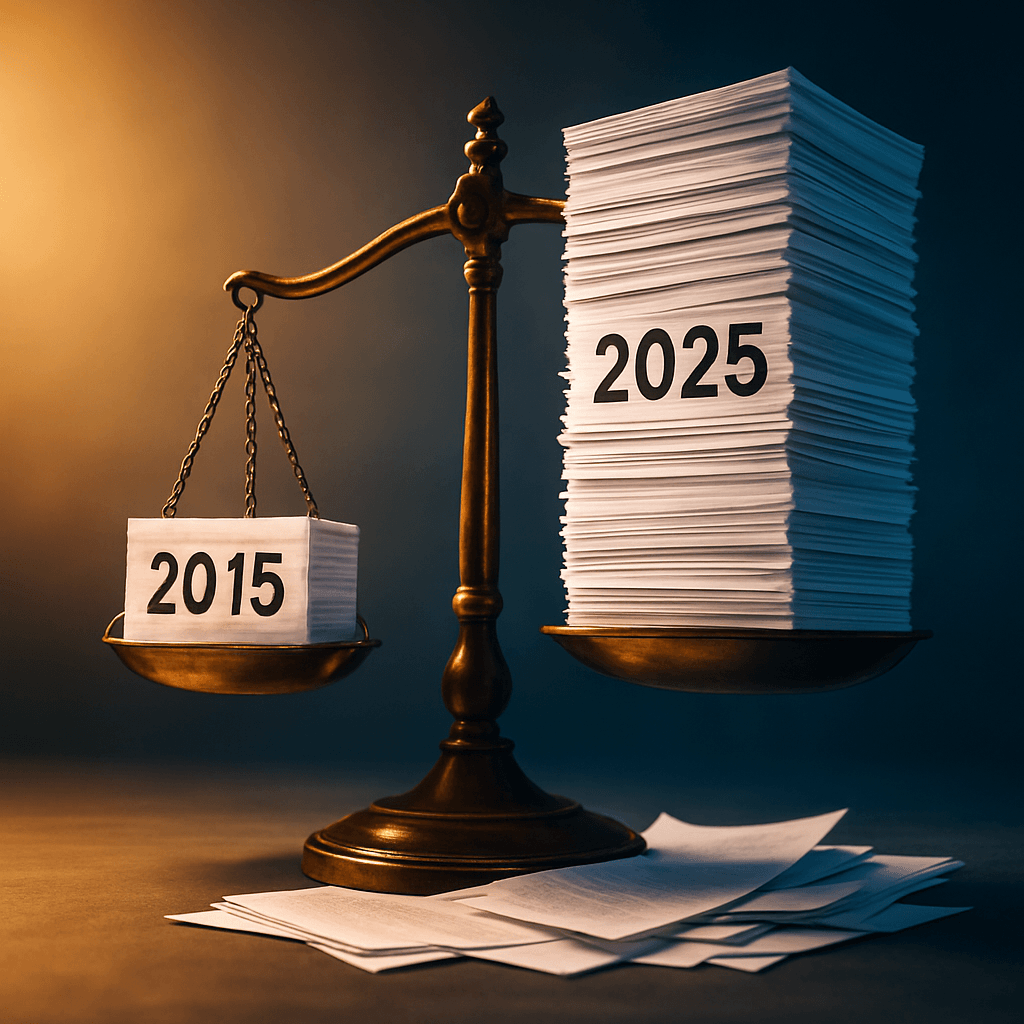

Sitemap Shock: Google's John Mueller reveals technically perfect XML sitemaps fail if content lacks appeal.

Content Trumps Tech: Google ignores sitemaps without new, important content worth indexing.

Quality Fixes Needed: Prioritize fresh, valuable content over technical tweaks for crawling success.

Why Google May Not Use Your Sitemap Despite Perfect Technical Setup

Google’s John Mueller recently dropped a bombshell about sitemaps that explains why your technically perfect XML sitemap might still fail in Search Console.

A frustrated website owner posted on Reddit about a confusing sitemap problem. Their sitemap returned perfect 200 status codes. The XML structure was valid. Server logs showed Googlebot successfully fetched the sitemap multiple times.
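The server-log check described here can be reproduced with a short script. The following is a minimal sketch, assuming the server writes the common "combined" access log format and that the sitemap lives at `/sitemap.xml`; the sample log lines, the path, and the function name `sitemap_fetches` are made up for illustration and are not from the Reddit thread. A user agent string can be spoofed, so a match only shows that a client claiming to be Googlebot requested the file.

```python
import re
from collections import Counter

# Combined log format:
# ip - - [time] "METHOD path HTTP/x" status size "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def sitemap_fetches(log_lines, sitemap_path="/sitemap.xml"):
    """Count response statuses for sitemap requests whose user agent
    claims to be Googlebot. Lines that do not parse are skipped."""
    statuses = Counter()
    for line in log_lines:
        match = LOG_PATTERN.match(line)
        if not match:
            continue
        if match["path"] != sitemap_path:
            continue
        if "Googlebot" not in match["agent"]:
            continue
        statuses[int(match["status"])] += 1
    return statuses


# Hypothetical sample lines, for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LOG = [
    f'66.249.66.1 - - [10/Mar/2025:06:25:01 +0000] "GET /sitemap.xml HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [11/Mar/2025:07:10:44 +0000] "GET /sitemap.xml HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.9 - - [11/Mar/2025:08:00:00 +0000] "GET /sitemap.xml HTTP/1.1" 200 5120 "-" "curl/8.5.0"',
    f'66.249.66.1 - - [11/Mar/2025:09:00:00 +0000] "GET /about HTTP/1.1" 200 900 "-" "{GOOGLEBOT_UA}"',
]

if __name__ == "__main__":
    print(sitemap_fetches(SAMPLE_LOG))
```

If every counted status is 200, the fetch itself is probably not the problem, which matches what the site owner reported.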

Yet Search Console kept showing “Couldn’t fetch” errors and “Sitemap could not be read” messages. This person checked everything twice. They manually submitted individual pages, which Google indexed successfully. But the sitemap URLs remained untouched.

The Real Reason Why Google May Not Use a Sitemap

Mueller’s response revealed the hidden truth about sitemap processing:

“One part of sitemaps is that Google has to be keen on indexing more content from the site. If Google’s not convinced that there’s new and important content to index, it won’t use the sitemap.”

This statement reframes how sitemaps work. A technically valid sitemap is not enough if Google has no interest in indexing more of the site’s content.

Google Evaluates Content Quality Before Processing Sitemaps

Mueller didn’t explicitly mention “site quality,” but his answer implies it strongly. Google must be “keen on indexing more content” that is “new and important.”

This suggests two potential problems with sites experiencing sitemap issues:

The site doesn’t produce much fresh content. Google sees no reason to check for updates regularly.

The existing content lacks importance or value. Google prioritizes crawling resources for sites with higher-quality content.
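One rough way to gauge the first problem is to look at how many sitemap URLs carry a recent `<lastmod>` date. The sketch below is only a self-audit heuristic: Google has not published how it judges freshness, and the 30-day window, the sample sitemap, and the function name `fresh_share` are assumptions chosen for illustration.

```python
import xml.etree.ElementTree as ET
from datetime import date, timedelta

# Namespace defined by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def fresh_share(sitemap_xml, today, window_days=30):
    """Return (fresh, total): how many <url> entries have a <lastmod>
    date within the last `window_days` days, out of all entries.
    Entries with a missing or unparseable <lastmod> count as not fresh."""
    root = ET.fromstring(sitemap_xml)
    cutoff = today - timedelta(days=window_days)
    urls = root.findall("sm:url", NS)
    fresh = 0
    for url in urls:
        lastmod = url.findtext("sm:lastmod", default="", namespaces=NS).strip()
        try:
            # <lastmod> may be a date or a full timestamp; keep the date part.
            modified = date.fromisoformat(lastmod[:10])
        except ValueError:
            continue
        if modified >= cutoff:
            fresh += 1
    return fresh, len(urls)


# Hypothetical sitemap, for illustration only.
SAMPLE_SITEMAP = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/a</loc><lastmod>2025-03-10</lastmod></url>
  <url><loc>https://example.com/b</loc><lastmod>2025-02-20T08:00:00+00:00</lastmod></url>
  <url><loc>https://example.com/c</loc><lastmod>2024-06-01</lastmod></url>
  <url><loc>https://example.com/d</loc></url>
</urlset>
"""

if __name__ == "__main__":
    fresh, total = fresh_share(SAMPLE_SITEMAP, today=date(2025, 3, 15))
    print(f"{fresh} of {total} URLs changed in the last 30 days")
```

A low share does not prove Google is skipping the sitemap, but it is a quick signal that the site may not be publishing much that is new.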

When facing persistent sitemap errors despite technical validity, Labrika can help identify the content quality signals Google uses to determine crawling priorities.

What Makes Content “Important” to Google

The word “important” covers a broad range of content factors. Your content might be missing elements that help users understand topics or make decisions.

Sometimes even highly ranked sites lack specific content types. You might need better images, step-by-step guides, videos, or other formats. The gap depends on the topic and on what your audience expects.

Content might also be trivial because it’s thin, duplicate, or offers nothing unique. Labrika reveals these quality gaps by analyzing on-page factors, content uniqueness, and technical elements that influence Google’s perception of page importance.

Technical Success Doesn’t Guarantee Crawling

This revelation explains why many SEO professionals feel confused about sitemap performance. You can have perfect technical implementation but still fail at the content level.

Google treats sitemaps as suggestions, not commands. The search engine decides whether your suggestions deserve attention based on content quality signals.

Your sitemap might be technically flawless, but Google may still decline to spend crawling resources on a site it does not consider worth indexing further.

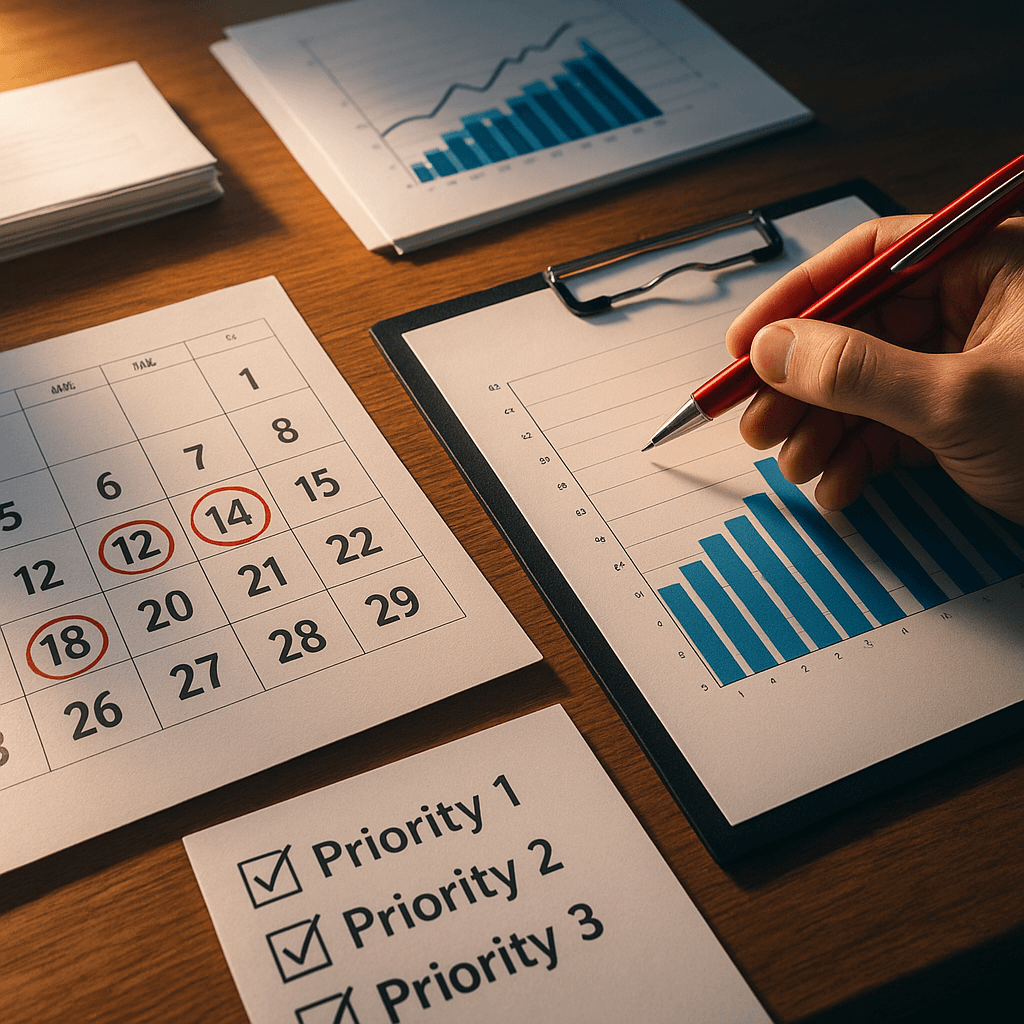

Beyond Technical Fixes for Sitemap Problems

When troubleshooting sitemap issues, most people focus on technical problems first. They check response codes, validate XML syntax, and verify robots.txt settings.
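Two of these technical checks can be run offline with Python's standard library. This is a minimal sketch, not a full validator: it confirms only that the XML is well-formed with the expected root element and at least one `<loc>`, and that a robots.txt body does not block Googlebot from the listed URLs. The helper names and sample inputs are hypothetical, and the response-code check is left out because it needs a live request.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def check_sitemap_xml(xml_text):
    """Return a list of problems; an empty list means the basic checks passed."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as exc:
        return [f"not well-formed XML: {exc}"]
    problems = []
    allowed_roots = (f"{{{SITEMAP_NS}}}urlset", f"{{{SITEMAP_NS}}}sitemapindex")
    if root.tag not in allowed_roots:
        problems.append(f"unexpected root element: {root.tag}")
    locs = [el.text.strip() for el in root.iter(f"{{{SITEMAP_NS}}}loc") if el.text]
    if not locs:
        problems.append("no <loc> entries found")
    return problems


def blocked_for_googlebot(robots_txt, urls):
    """Return the URLs that the given robots.txt body disallows for Googlebot."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch("Googlebot", url)]


# Hypothetical inputs, for illustration only.
GOOD_SITEMAP = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://example.com/</loc></url>"
    "</urlset>"
)
ROBOTS_TXT = "User-agent: *\nDisallow: /private/\n"
URLS = ["https://example.com/sitemap.xml", "https://example.com/private/page"]

if __name__ == "__main__":
    print(check_sitemap_xml(GOOD_SITEMAP))
    print(blocked_for_googlebot(ROBOTS_TXT, URLS))
```

If both checks come back clean and Search Console still reports errors, the cause is probably not technical.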

But Mueller’s explanation suggests that technical validity alone does not settle the matter. Whether Google uses your sitemap also depends on how interested it is in indexing more of your content.

Think like your visitors when evaluating content importance. What would help them most? What information do they need to solve problems or make decisions?

The answer often lies in comprehensive content analysis that examines uniqueness, depth, and competitive gaps across your sitemap URLs.

Are you spending time fixing technical sitemap issues when the real problem lies in how Google perceives your content quality? Tools like Labrika can help diagnose whether content quality signals are preventing Google from processing your otherwise perfect sitemap.