TL;DR Summary:

- AI Coding Flaw Exposed: Major vulnerability in vibe coding platforms allows unauthorized access to private enterprise apps via unprotected API endpoints, bypassing SSO and access controls.

- Shared Infrastructure Danger: Thousands of applications on shared systems face simultaneous risks, turning single flaws into widespread ecosystem threats.

- Security Overhaul Needed: Organizations must audit APIs, enforce zero-trust, and assess vendors to counter AI development's hidden dangers.

The Hidden Security Risks of AI-Powered Application Development

A significant security vulnerability recently discovered in a major AI coding platform has exposed weaknesses in how applications generated with artificial intelligence are secured. The flaw, which affected private enterprise applications built on the platform, points to deeper security issues in AI-driven development environments.

Understanding AI-Powered “Vibe Coding” Platforms

The concept of vibe coding has gained massive traction among developers and organizations looking to streamline their application development process. These platforms allow users to create full applications simply by describing what they want in plain text, with AI handling the technical implementation. While this democratizes app development, it introduces a new layer of complexity to application security.

The compromised platform, recently acquired by a prominent website builder, operates on a shared infrastructure model where multiple applications rely on the same foundational systems. This architectural approach, while efficient, creates potential single points of failure that can affect entire ecosystems of applications simultaneously.

Anatomy of the Security Vulnerability

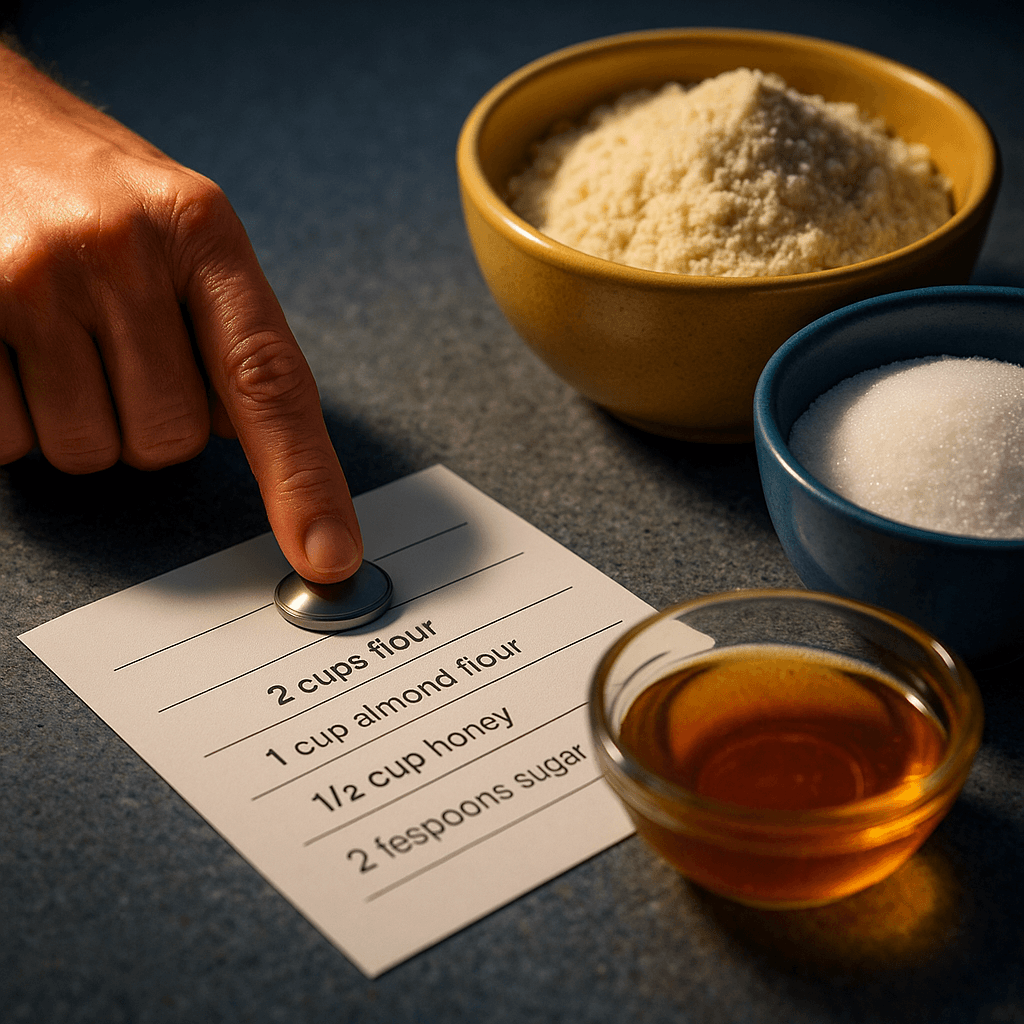

The vulnerability centered on two previously unknown API endpoints that handled user registration and email verification. These endpoints lacked proper authentication controls, so anyone who knew an application’s ID could create a verified account inside a private enterprise application.

This security gap effectively nullified multiple layers of protection, including:

- Single Sign-On (SSO) systems

- Enterprise authentication protocols

- Access control mechanisms

- User verification processes

The implications were severe: by exploiting these unprotected endpoints, unauthorized users could access sensitive enterprise data, including personal information and confidential HR records.
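The class of flaw described above comes down to a registration path that trusts the application ID alone. As a minimal sketch of the missing server-side check, and not the platform's actual code, the example below refuses sign-ups to a private app unless the caller is on an invite list or arrives with an SSO-verified session. The names `App`, `RegistrationRequest`, `can_register`, `invited_emails`, and `sso_verified` are all hypothetical, and how the SSO session is validated is assumed to happen elsewhere.

```python
from dataclasses import dataclass, field


@dataclass
class App:
    app_id: str
    is_private: bool
    # Hypothetical allowlist of invited addresses, stored in lowercase.
    invited_emails: set[str] = field(default_factory=set)


@dataclass
class RegistrationRequest:
    app_id: str
    email: str
    # Assumed to be set only after the organization's SSO provider has
    # validated the caller's session; that validation is out of scope here.
    sso_verified: bool = False


def can_register(app: App, req: RegistrationRequest) -> bool:
    """Decide whether a registration request may create an account."""
    if req.app_id != app.app_id:
        return False
    if not app.is_private:
        return True  # public apps accept open sign-up
    # Private apps: knowing the app ID is never sufficient on its own.
    return req.sso_verified or req.email.lower() in app.invited_emails


hr_app = App("app-123", is_private=True, invited_emails={"alice@example.com"})
outsider = RegistrationRequest("app-123", "mallory@example.net")
invited = RegistrationRequest("app-123", "Alice@example.com")
```

Here `can_register(hr_app, outsider)` is rejected even though the outsider supplied the correct app ID, while the invited address is accepted. The point of the design is that the decision runs on the server for every request, so an undocumented endpoint cannot skip it by accident.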

The Ripple Effect of Shared Infrastructure

What makes this vulnerability particularly concerning is its potential impact across entire application ecosystems. Unlike traditional development where each application maintains its own codebase and infrastructure, vibe coding platforms create a shared environment where multiple applications inherit the same underlying architecture.

This shared model means:

- Security flaws can affect thousands of applications simultaneously

- Vulnerabilities can spread rapidly across enterprise systems

- A single weak point can compromise entire networks of applications

- Traditional isolation-based security measures become less effective

Swift Response and Security Implications

The platform operator responded within 24 hours to patch the vulnerability, a quick turnaround that suggests growing awareness of security risks in AI-powered development tools. While no malicious exploitation was detected, the incident highlights the importance of proactive security measures in emerging technology spaces.

Rethinking Security in AI-Driven Development

Organizations utilizing AI-powered development platforms must adopt new approaches to security:

Infrastructure Assessment

- Regular auditing of shared platform components

- Continuous monitoring of API endpoints

- Implementation of anomaly detection systems

- Regular security posture evaluations
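One way to make endpoint auditing concrete is to keep an inventory of routes and flag any that skip authentication without being explicitly approved as public. The sketch below assumes such an inventory can be exported from the platform or an API gateway; the `Route` type, the `PUBLIC_ALLOWLIST` contents, and the example paths are hypothetical and are not the endpoints involved in the incident.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    method: str
    path: str
    requires_auth: bool


# Routes that are intentionally public; everything else must require auth.
PUBLIC_ALLOWLIST = {("GET", "/health"), ("POST", "/login")}


def find_unprotected(routes: list[Route]) -> list[Route]:
    """Return routes that skip authentication without being allowlisted."""
    return [
        r
        for r in routes
        if not r.requires_auth
        and (r.method.upper(), r.path) not in PUBLIC_ALLOWLIST
    ]


# Hypothetical inventory, for illustration only.
inventory = [
    Route("GET", "/health", requires_auth=False),
    Route("POST", "/apps/{app_id}/register", requires_auth=False),
    Route("POST", "/apps/{app_id}/verify-email", requires_auth=False),
    Route("GET", "/apps/{app_id}/records", requires_auth=True),
]

for route in find_unprotected(inventory):
    print(f"UNPROTECTED: {route.method} {route.path}")
```

Run on a schedule or in CI, a check like this turns "continuous monitoring" into a failing build whenever a new unauthenticated route appears. It only catches routes that are in the inventory, so it complements rather than replaces external scanning.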

Access Control Management

- Enhanced authentication layering

- Strict API exposure limitations

- Regular access control reviews

- Implementation of zero-trust principles
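In code, zero-trust principles often reduce to a deny-by-default rule: an action is refused unless a policy has been registered that explicitly allows it. The following is a rough sketch under that assumption; `PolicyRegistry`, the action names, and the shape of the request context are invented for illustration.

```python
from typing import Callable

# A policy inspects a request context and returns True to allow the action.
Policy = Callable[[dict], bool]


class PolicyRegistry:
    """Deny-by-default authorization: no registered policy means no access."""

    def __init__(self) -> None:
        self._policies: dict[str, Policy] = {}

    def register(self, action: str, policy: Policy) -> None:
        self._policies[action] = policy

    def is_allowed(self, action: str, context: dict) -> bool:
        policy = self._policies.get(action)
        if policy is None:
            return False  # unknown or forgotten action: refuse
        return bool(policy(context))


registry = PolicyRegistry()
registry.register(
    "records:read",
    lambda ctx: ctx.get("authenticated") is True and "hr" in ctx.get("roles", ()),
)

hr_user = {"authenticated": True, "roles": ["hr"]}
```

With this setup, `registry.is_allowed("records:read", hr_user)` succeeds, but `registry.is_allowed("users:register", hr_user)` fails because nobody registered a policy for that action. An endpoint added without a corresponding policy is therefore closed rather than silently open.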

Risk Management

- Vendor security assessment protocols

- Third-party security audits

- Incident response planning

- Regular security training updates

The Future of AI Application Security

As artificial intelligence continues reshaping application development, security practices must evolve accordingly. The traditional model of isolated security measures no longer suffices in an environment where applications share common infrastructure and inherit collective vulnerabilities.

Organizations need to balance the efficiency gains of AI-powered development with robust security measures that account for:

- Shared infrastructure risks

- Automated code generation vulnerabilities

- API security management

- Authentication protocol effectiveness

Moving Forward with AI-Driven Development

The incident serves as a crucial reminder that while AI can accelerate development processes, it shouldn’t come at the expense of security. Organizations must maintain vigilant oversight of their AI-powered development tools and implement comprehensive security measures that address the unique challenges these platforms present.

As we continue embracing AI-driven development methods, one question remains paramount: How can we create a framework that maintains the speed and accessibility of AI-powered development while ensuring ironclad security across shared infrastructure systems?