TL;DR Summary:

- Key finding: AI chatbots frequently misreport news. Around 45–51% of assistant responses in the BBC/EBU research had significant problems, and about 91% of answers in the earlier BBC round contained some issue, such as errors, altered quotes, or missing context.

- Types of errors: Common failures included factual mistakes (wrong dates, statistics, or claims), altered or fabricated quotes, and an inability to reliably distinguish fact from opinion or provide necessary context.

- Real-world impact: These inaccuracies can spread misinformation with serious consequences (e.g., incorrect health guidance, wrong claims about public figures), undermining public trust and causing potential harm.

- Recommended response: Use AI as an aid, not a replacement for human journalism. Require human fact-checking, clear sourcing and attribution, contextualization of facts vs. opinion, and industry standards and fact-checking mechanisms to improve accuracy.

The Accuracy Crisis: AI Chatbots Struggle with News Reporting

AI chatbots have emerged as powerful tools for automating tasks such as content generation and news reporting. However, a recent study by the BBC has raised significant concerns about how accurately and reliably these AI assistants report the news.

The BBC Study: A Closer Look

The BBC conducted an extensive study involving some of the most popular AI chatbots, including ChatGPT, Microsoft’s Copilot, Google’s Gemini, and Perplexity. The researchers submitted 100 questions about current news to these AI assistants and asked them to cite BBC articles as sources. The results were alarming.

Overall, 51% of the responses from these AI chatbots had significant problems, and 91% of the answers contained at least some form of issue. Here are some key findings:

- Factual Errors: 19% of the responses that cited BBC content contained factual errors, such as incorrect dates, statistics, and statements.

- Altered Quotes: 13% of the quotes from BBC articles were either altered or completely fabricated.

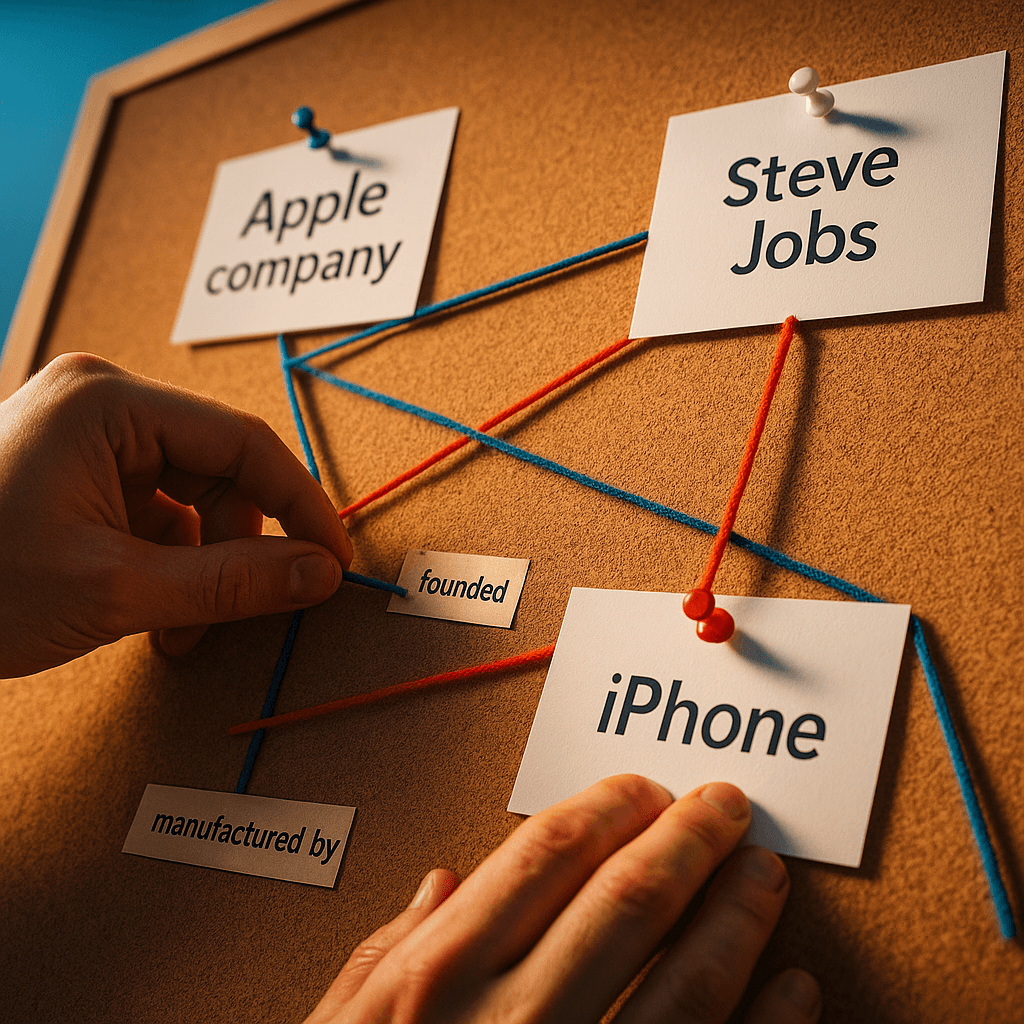

- Context and Opinion: AI assistants often struggled to distinguish between facts and opinions and failed to provide the necessary context for the information they presented.

Real-World Consequences

These inaccuracies are not just minor oversights; they can have serious real-world consequences. For instance, Google’s Gemini stated that the NHS advises people not to start vaping and recommends that smokers who want to quit use other methods. That misrepresents the guidance: the NHS actually recommends vaping as a safer alternative to smoking for those trying to quit.

Other examples include errors about TV presenter Dr. Michael Mosley’s death and incorrect statements about political leaders still being in office after they had stepped down or been replaced. These mistakes highlight the potential for AI chatbots to spread misinformation, which can lead to confusion, mistrust, and even harm.

The Necessity of Accurate News Reporting

Accuracy is the cornerstone of trustworthy news reporting. When news is distorted or misleading, it erodes public trust and can leave a society without a shared understanding of the facts. This is particularly critical in an era where misinformation can spread rapidly through digital platforms.

The BBC study underscores the importance of ensuring that news, whether delivered through traditional media or AI assistants, is accurate and reliable. It is essential for maintaining a well-informed public and for the functioning of a healthy society.

Human Oversight: The Key to Accuracy

While AI can simplify content creation and provide quick answers, human review is crucial for spotting mistakes and ensuring the quality of the information. Marketers and content creators should be aware that relying solely on AI for news reporting can harm their brand’s reputation and credibility.

Human-centric journalism, where human editors and fact-checkers are involved in the process, is still the gold standard for accuracy. AI tools can be powerful aids, but they should not replace the critical eye and judgment of human professionals.

Best Practices for Using AI in Content Creation

For those considering using AI tools in their content strategy, here are some best practices to keep in mind:

- Accuracy Matters: Verify that the content generated by AI is accurate. Check dates, statistics, and quotes against the original sources before publishing.

- Human Review: Always have human reviewers go through AI-generated content to catch any errors or inaccuracies.

- Context is Key: Be aware that AI often struggles with providing context and distinguishing between facts and opinions. Make sure the content supplies the background readers need and clearly separates reported facts from opinion.

- Proper Attribution: When using AI to summarize or reference sources, make sure to credit and link to the correct pages to maintain transparency.
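As one illustration of how part of this checking could be supported by tooling, here is a minimal Python sketch that flags quotes in an AI-generated summary that do not appear verbatim in the source article. It is not taken from the BBC study; the function names (`normalize`, `extract_quotes`, `unverified_quotes`) and the sample texts are hypothetical, and the check only catches quotes that differ from the source wording. It does not judge facts or context, so it is an aid to human review rather than a replacement for it.

```python
import re

# Hypothetical helpers for illustration only; not part of any study or library.
# Matches text inside straight ("...") or curly (“...”) double quotes.
QUOTE_PATTERN = re.compile(r'"([^"]+)"|“([^”]+)”')


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences are ignored."""
    return " ".join(text.lower().split())


def extract_quotes(text: str) -> list[str]:
    """Return every passage enclosed in straight or curly double quotes."""
    return [straight or curly for straight, curly in QUOTE_PATTERN.findall(text)]


def unverified_quotes(ai_text: str, source_text: str) -> list[str]:
    """Return quotes from the AI text that do not appear verbatim in the source."""
    source = normalize(source_text)
    return [q for q in extract_quotes(ai_text) if normalize(q) not in source]


# Made-up example texts (assumptions, not real articles).
source = 'The minister said the plan was "ambitious but achievable" and would start in May.'
summary = 'The minister called the plan "ambitious but achievable" and "fully funded".'

for quote in unverified_quotes(summary, source):
    print(f"Needs human review: {quote!r}")
# prints: Needs human review: 'fully funded'
```

A quote that passes this check can still be misattributed or stripped of context, so anything the script does not flag should still go to a human editor.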

The Path Forward: Ensuring Accuracy in AI-Assisted News Reporting

As AI continues to evolve and become more integrated into our daily lives, it is crucial to address the accuracy issues highlighted by the BBC study. The tech and media sectors must work together to ensure that AI tools are developed and used in a way that prioritizes accuracy and trustworthiness.

This collaboration could involve setting standards for AI-generated content, implementing robust fact-checking mechanisms, and educating the public about the limitations and potential biases of AI assistants.

Striking the Right Balance

The BBC study serves as a wake-up call about the risks of relying on AI chatbots for news reporting. While AI has the potential to revolutionize content creation, it is clearly not yet ready to replace human judgment.

As we move forward, one question remains: How can we strike the right balance between harnessing the efficiency and innovation of AI and ensuring the accuracy and trustworthiness of the information we consume? The answer will be crucial in shaping the future of news reporting and in keeping the public well-informed and able to trust the information they receive.