TL;DR Summary:

- Traffic Drop Fix: An overnight 40% traffic drop often comes from overlooked technical issues rather than content problems or penalties.

- Five-Layer Framework: Debug systematically through the Crawl, Render, Index, Rank, and Click layers to pinpoint root causes efficiently.

- Quick Wins Proven: A fix as small as a robots.txt tweak restored an e-commerce site’s traffic within 72 hours after a 60% drop.

Your website traffic just dropped 40% overnight, and you’re staring at Search Console wondering what went wrong. Before you panic and start rewriting every page or hunting for mysterious penalties, there’s a smarter approach that can save you weeks of guesswork.

Most people attack SEO problems backward. They see rankings drop and immediately jump to content updates or link building. That’s like hearing your car make a weird noise and immediately replacing the engine instead of checking if you’re out of gas.

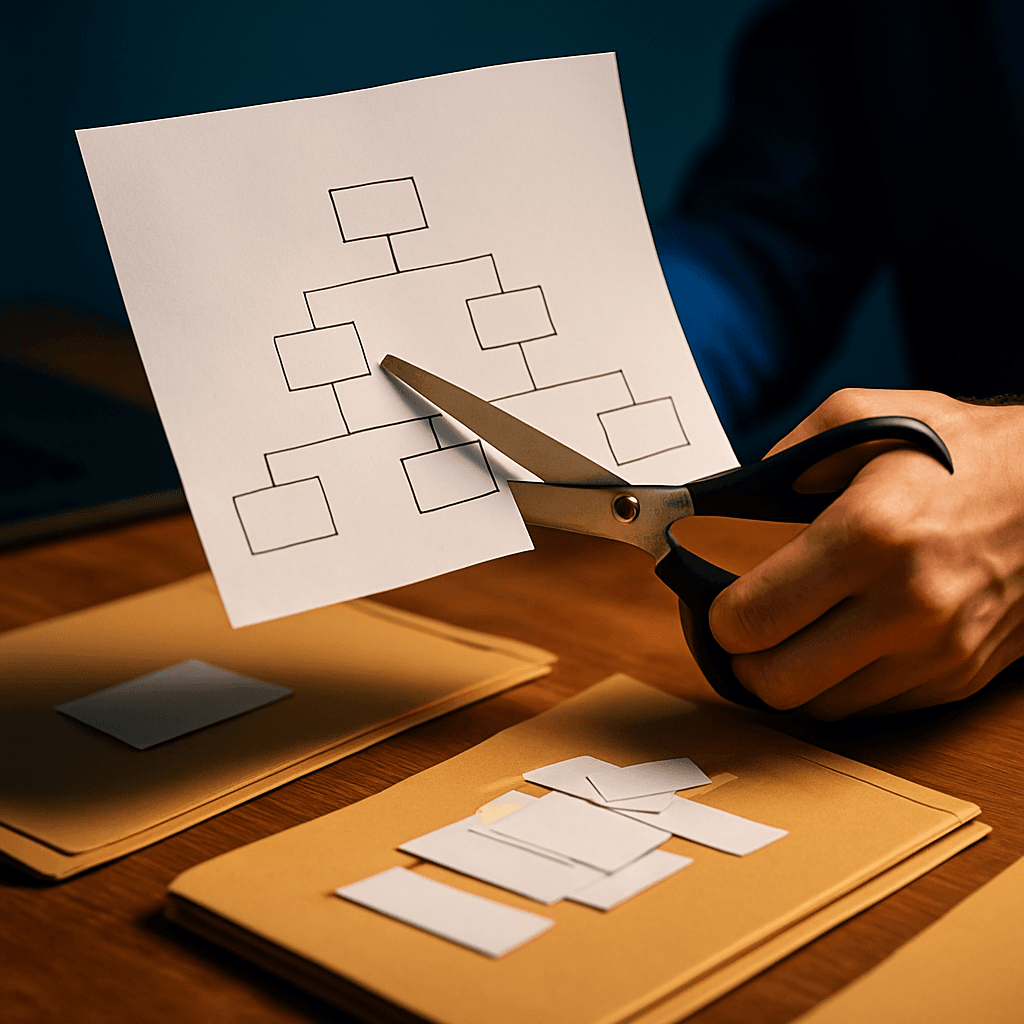

The Five-Layer SEO Debugging Framework

Professional SEO debugging follows a structured hierarchy: Crawl, Render, Index, Rank, and Click. Each layer builds on the previous one, so starting at the wrong level wastes time and resources. If you hire an SEO debugging expert, expect them to follow this same methodology.

Crawl Issues: The Foundation Layer

Search engines can’t rank pages they can’t reach. Period. Start here before touching anything else.

Pull up Google Search Console and check the Page indexing report (formerly called Coverage) alongside the Crawl stats report. Look for patterns in crawl errors: are they concentrated in specific sections, or did they spike after a recent site update?

I’ve watched businesses lose thousands in revenue because someone accidentally blocked their entire product catalog with a single robots.txt line. One misplaced “Disallow: /products/” can tank an e-commerce site overnight.
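A quick way to catch this kind of mistake is to test your most important URLs against the robots.txt rules before and after every deploy. The sketch below uses Python's standard `urllib.robotparser`; the sample rules and the `shop.example.com` URLs are made up for illustration. Note that Python's parser applies the first matching rule, while Google uses the most specific match, so treat the result as a first-pass warning rather than a definitive verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt containing the accidental catalog block.
ROBOTS_TXT = """\
User-agent: *
Disallow: /products/
"""

# Hypothetical URLs that must stay crawlable.
URLS = [
    "https://shop.example.com/products/trail-shoes",
    "https://shop.example.com/blog/choosing-trail-shoes",
]

print(blocked_urls(ROBOTS_TXT, URLS))
# ['https://shop.example.com/products/trail-shoes']
```

The same function works for the JavaScript and CSS case: pass in the script and stylesheet URLs your templates load and check that none come back blocked.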

Run a comprehensive crawl using tools like Screaming Frog or Sitebulb. Pay special attention to:

- 404 errors on important pages

- Server timeouts during peak traffic

- Blocked JavaScript and CSS files

- Orphaned pages with no internal links

JavaScript and CSS blocks are particularly sneaky. Google needs these resources to understand modern websites, but many sites accidentally block them, creating a gap between what users see and what search engines process.
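Orphaned pages from the list above are easy to find once you have two exports: the URLs you expect to exist (for example from your sitemap) and the internal links a crawler discovered. Here is a minimal sketch assuming both are already loaded into plain Python structures; the function name, the sample paths, and the `entry_points` parameter are illustrative, not part of any crawler's API.

```python
def find_orphans(known_urls, links, entry_points=("/",)):
    """Return known URLs that no other page links to.

    known_urls: iterable of URLs you expect to be reachable (e.g. from a sitemap).
    links: dict mapping each crawled page to the pages it links to.
    entry_points: pages that do not need inbound links, such as the home page.
    """
    linked = set()
    for source, targets in links.items():
        # A page linking to itself does not make it reachable.
        linked.update(target for target in targets if target != source)
    return sorted(set(known_urls) - linked - set(entry_points))


# Hypothetical sitemap and crawl data.
SITEMAP_URLS = ["/", "/products/", "/products/trail-shoes", "/products/old-model"]
LINKS = {
    "/": ["/products/"],
    "/products/": ["/products/trail-shoes", "/"],
    "/products/old-model": ["/products/old-model"],
}

print(find_orphans(SITEMAP_URLS, LINKS))
# ['/products/old-model']
```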

Render Problems: The Hidden Visibility Killer

Even if Google can crawl your pages, rendering issues might prevent proper content analysis. This layer trips up many JavaScript-heavy websites.

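Use the URL Inspection tool in Search Console (run a live test and view the tested page) or the Rich Results Test to see what Google renders. Google retired its standalone Mobile-Friendly Test in late 2023. Compare the rendered HTML and screenshot to what you see in your browser. Mismatches here explain many mysterious ranking drops.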

Single-page applications and dynamic content loading create the biggest rendering headaches. If your content loads via JavaScript after the initial page load, Google might miss it entirely. Server-side rendering or pre-rendering critical content solves this problem.
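One rough way to spot content that depends on JavaScript is to check whether your critical phrases appear in the raw HTML the server sends, before any script runs. The sketch below uses Python's standard `html.parser` and ignores anything inside `<script>` and `<style>` tags. The sample page and phrases are invented, and a missing phrase is only a hint: Google does render JavaScript, so confirm with the URL Inspection tool.

```python
from html.parser import HTMLParser


class _VisibleText(HTMLParser):
    """Collects text outside <script> and <style> elements."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_from_raw_html(html, phrases):
    """Return the phrases that do not appear in the server-sent text."""
    extractor = _VisibleText()
    extractor.feed(html)
    extractor.close()
    text = " ".join(" ".join(extractor.chunks).split()).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]


# Hypothetical product page whose price is injected by JavaScript.
RAW_HTML = """
<html><body>
  <h1>Trail Running Shoes</h1>
  <div id="price"></div>
  <script>document.getElementById("price").textContent = "$129.00";</script>
</body></html>
"""

print(missing_from_raw_html(RAW_HTML, ["Trail Running Shoes", "$129.00"]))
# ['$129.00']
```

Anything this reports as missing is a candidate for server-side rendering or pre-rendering.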

Check how much of your shipped JavaScript goes unused with the Coverage panel in Chrome DevTools, and check transfer sizes in the Network panel. Bloated JavaScript files slow rendering and hurt user experience. Tree shaking and code splitting can dramatically improve render times.

Rendering fixes alone often recover 20-30% of lost organic impressions on modern websites. Don’t assume your site renders correctly just because it looks fine to you.

Index Issues: Getting Your Content Recognized

Pages that can’t get indexed will never rank, no matter how great your content is. This layer reveals some of the most frustrating SEO problems.

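Use Search Console’s URL Inspection tool to check pages that should be indexed but aren’t appearing in search results. The tool inspects one URL at a time in the interface, so for batches use the Page indexing report or the URL Inspection API. Look for patterns: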

- Thin content pages getting filtered out

- Near-duplicate category pages (identical titles and meta descriptions are a common symptom)

- Canonical tag issues pointing to wrong URLs

- Server errors or timeouts that stop pages from being processed (Core Web Vitals scores affect ranking, not whether a page is indexed)
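Canonical problems are among the easiest of these patterns to check in bulk. The sketch below parses a page's HTML with Python's standard `html.parser` and flags a canonical that points somewhere other than the page itself. It also checks for a stray `noindex` in the meta robots tag, which is not in the list above but often turns up in the same audits. The function name and sample URLs are hypothetical, and a canonical pointing elsewhere is sometimes intentional, so review each hit.

```python
from html.parser import HTMLParser


class _HeadSignals(HTMLParser):
    """Captures the canonical URL and meta robots directives from a page."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.robots = ""

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rel_values = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values:
            self.canonical = attr.get("href")
        elif tag == "meta" and (attr.get("name") or "").lower() == "robots":
            self.robots = (attr.get("content") or "").lower()


def index_blockers(url, html):
    """Return human-readable reasons this page might not be indexed as url."""
    signals = _HeadSignals()
    signals.feed(html)
    problems = []
    directives = {token.strip() for token in signals.robots.split(",")}
    if "noindex" in directives:
        problems.append("meta robots contains noindex")
    if signals.canonical and signals.canonical.rstrip("/") != url.rstrip("/"):
        problems.append(f"canonical points to {signals.canonical}")
    return problems


# Hypothetical product page whose template points every canonical at the home page.
PAGE_URL = "https://shop.example.com/products/trail-shoes"
PAGE_HTML = """
<head>
  <link rel="canonical" href="https://shop.example.com/">
  <meta name="robots" content="index, follow">
</head>
"""

print(index_blockers(PAGE_URL, PAGE_HTML))
# ['canonical points to https://shop.example.com/']
```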

With mobile-first indexing, Google primarily uses the mobile version of a page for indexing and ranking. Content that is missing, hidden, or broken on mobile may never make it into the index, even when the desktop version looks fine.

Internal linking structure matters more than most people realize. Pages buried deep in your site architecture or completely orphaned often stay unindexed. Create clear pathways for both users and crawlers to reach important content.
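To see how deeply pages are buried, you can compute click depth from the home page with a breadth-first search over your internal links. This is a minimal sketch assuming the link graph comes from a crawler export; the function names, the sample paths, and the depth threshold of 2 are illustrative choices, not a standard.

```python
from collections import deque


def click_depths(start, links):
    """Breadth-first search: minimum number of clicks from start to each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def pages_deeper_than(depths, max_depth):
    """Return pages that need more than max_depth clicks to reach."""
    return sorted(page for page, depth in depths.items() if depth > max_depth)


# Hypothetical internal link graph.
LINKS = {
    "/": ["/shoes/"],
    "/shoes/": ["/shoes/trail/", "/"],
    "/shoes/trail/": ["/shoes/trail/model-x"],
}

depths = click_depths("/", LINKS)
print(depths["/shoes/trail/model-x"])   # 3
print(pages_deeper_than(depths, 2))     # ['/shoes/trail/model-x']
```

Pages that never appear in the result at all are unreachable from the start page, which is another way to surface orphans.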

Ranking Factors: Why Good Pages Don’t Rank

Once your pages are crawled, rendered, and indexed, ranking factors determine their search position. This layer requires the most nuanced analysis.

Compare your top-performing pages to recent losers. Look for differences in:

- Content depth and expertise signals

- Backlink profiles and anchor text diversity

- Page loading speed and user experience metrics

- Alignment with current search intent

Algorithm updates can dramatically shift ranking factors. A page that ranked well for “best running shoes” might lose visibility if Google starts prioritizing recent product reviews over older comparison guides.

Before making changes, write down your hypothesis. “Rankings dropped because our product pages lack recent customer reviews” gives you something specific to test and measure.

Many businesses bring in an SEO debugging consultant at this stage because ranking analysis requires deep technical knowledge combined with strategic thinking.

Click-Through Rate: The Final Performance Layer

High rankings mean nothing if users don’t click your results. This layer focuses on optimizing your search appearance.

Check Search Console’s Performance report for pages with high impressions but low click-through rates. These pages rank well but fail to attract clicks.
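If you export the Performance report, a few lines of Python can surface those pages. The sketch below assumes each row has already been loaded as a dict with `page`, `clicks`, and `impressions` keys. The thresholds of 1,000 impressions and a 1% CTR are arbitrary starting points, not benchmarks, and a reasonable CTR depends heavily on ranking position.

```python
def low_ctr_pages(rows, min_impressions=1000, max_ctr=0.01):
    """Return (page, ctr) pairs with many impressions but few clicks, worst first."""
    flagged = []
    for row in rows:
        impressions = row["impressions"]
        if impressions == 0 or impressions < min_impressions:
            continue  # too little data to judge
        ctr = row["clicks"] / impressions
        if ctr <= max_ctr:
            flagged.append((row["page"], round(ctr, 4)))
    return sorted(flagged, key=lambda item: item[1])


# Hypothetical rows from a Performance report export.
ROWS = [
    {"page": "/shoes/trail", "clicks": 40, "impressions": 8000},
    {"page": "/shoes/road", "clicks": 300, "impressions": 6000},
    {"page": "/blog/new-post", "clicks": 0, "impressions": 50},
]

print(low_ctr_pages(ROWS))
# [('/shoes/trail', 0.005)]
```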

Title tags and meta descriptions act as your search result advertisement. Make them specific and compelling:

- Generic: “Running Shoes – SportStore”

- Specific: “Trail Running Shoes Tested by Marathon Runners – 2024 Guide”

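Structured data markup can make your pages eligible for rich results that stand out. Product ratings and breadcrumb navigation in search results can boost click-through rates, in some cases by 20-30%, though the lift varies by query. Google now shows FAQ rich results only for a limited set of authoritative government and health sites, so don’t count on those.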
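As an illustration, here is a small sketch that builds a JSON-LD snippet for a product with an aggregate rating, using schema.org's `Product` and `AggregateRating` types. The function name and sample values are made up, and Google's product rich results may require additional properties, so validate the output with the Rich Results Test before shipping it.

```python
import json


def product_json_ld(name, rating_value, review_count):
    """Return a <script> tag containing schema.org Product markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        },
    }
    # Escape "</" so a value can never close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical product data.
print(product_json_ld("Trail Running Shoes", 4.6, 212))
```

Generating the markup from the same data that renders the page keeps the ratings in your structured data consistent with what users see.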

Creating a Systematic Debugging Process

Document every hypothesis before you start digging. This prevents confirmation bias and keeps you focused on data rather than assumptions.

Create a debugging checklist that your team can follow consistently:

1. Export crawl errors from Search Console

2. Run site crawl and identify accessibility issues

3. Test rendering on mobile and desktop

4. Check indexation status for key pages

5. Analyze ranking changes against algorithm updates

6. Review click-through rates and meta tag performance
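The checklist above maps naturally onto code: run one check per layer, in order, and stop at the first layer that reports a problem. The sketch below shows only the ordering logic. The three check functions are hypothetical stubs with hard-coded results; in practice each would wrap a real test for that layer.

```python
def first_failing_layer(url, checks):
    """Run layer checks in order and return (layer, problem) for the first failure.

    checks: list of (layer_name, check) pairs, where check(url) returns None
    when the layer is healthy or a short description of the problem.
    """
    for layer, check in checks:
        problem = check(url)
        if problem is not None:
            return layer, problem
    return None


# Hypothetical stub checks standing in for real per-layer tests.
def crawl_check(url):
    return None


def render_check(url):
    return "pricing block missing from rendered HTML"


def index_check(url):
    return "canonical points elsewhere"


CHECKS = [("crawl", crawl_check), ("render", render_check), ("index", index_check)]

print(first_failing_layer("https://example.com/pricing", CHECKS))
# ('render', 'pricing block missing from rendered HTML')
```

Stopping at the first failure is deliberate: a problem in a lower layer makes findings in the layers above it unreliable until it is fixed.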

Cross-validate findings using multiple data sources. Search Console, Bing Webmaster Tools, and server log analysis often reveal different pieces of the puzzle.
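Server logs are the least filtered of these sources because they record every request Googlebot actually made. Here is a minimal sketch that counts response codes for requests whose user agent mentions Googlebot. It assumes the common "combined" access log format, and the sample lines are invented. User agents can be spoofed, so verify suspicious traffic with a reverse DNS lookup before drawing conclusions.

```python
import re
from collections import Counter

# Matches the request, status, and final quoted field (user agent) of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_status_counts(lines):
    """Count HTTP status codes for requests that identify as Googlebot."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match.group("agent"):
            counts[match.group("status")] += 1
    return counts


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Hypothetical access log lines.
SAMPLE_LOG = [
    '66.249.66.1 - - [10/Mar/2024:06:25:14 +0000] "GET /products/trail-shoes HTTP/1.1" 200 5123 "-" "' + GOOGLEBOT_UA + '"',
    '66.249.66.1 - - [10/Mar/2024:06:25:20 +0000] "GET /products/old-model HTTP/1.1" 404 312 "-" "' + GOOGLEBOT_UA + '"',
    '203.0.113.9 - - [10/Mar/2024:06:26:01 +0000] "GET /products/old-model HTTP/1.1" 404 312 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

counts = googlebot_status_counts(SAMPLE_LOG)
print(counts["200"], counts["404"])
# 1 1
```

A sudden rise in 404 or 5xx counts for Googlebot right after a release points back to the crawl layer.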

For larger websites, assign different team members to each layer. Developers handle crawl and render issues, content teams manage indexation and ranking factors, while designers optimize click-through elements.

Real-World Debugging Success Stories

After a major site migration, one e-commerce site lost 60% of its organic traffic. Working through the five layers quickly surfaced JavaScript files blocked in robots.txt. A one-line change restored traffic within 72 hours.

Another case involved a SaaS company whose product pages stopped ranking. Rendering analysis revealed that pricing information was loading via JavaScript after Google’s render timeout. Server-side rendering the pricing data recovered their rankings within two weeks.

These examples show why systematic debugging beats random fixes. Experienced SEO debugging consultants follow similar methodologies to isolate root causes quickly.

Making SEO Debugging Repeatable

Template your debugging workflows so they become routine rather than crisis management. Create standard operating procedures for common scenarios like post-migration audits or algorithm update responses.

Weekly crawl audits after site updates catch problems before they impact rankings. Monthly indexation reviews identify content that’s getting buried or filtered out.

Build debugging into your development workflow. Test crawlability and rendering on staging sites before pushing changes live.

Most visibility problems trace back to the first three layers—crawl, render, and index. Teams often waste months on content refreshes when technical issues were blocking their pages from being properly processed.

The framework can cut debugging time substantially by eliminating guesswork and providing a clear path from symptoms to solutions. Instead of trying random fixes, you systematically work through each layer until you find the real problem.

What crawl errors are hiding in your Search Console right now that could be quietly destroying your search visibility?