TL;DR Summary:

Hidden AI Threat: Directed bias attacks inject false narratives into AI training data, subtly reshaping brand perceptions through manipulated reviews and contexts.

Sophisticated Manipulation Tactics: Attackers hijack authority with fake papers and embed hidden prompts, exploiting AI's inability to discern subtle biases.

Urgent Defense Shift: Brands must monitor narrative ecosystems with AI tools, conduct audits, and build resilient strategies to counter these invisible threats.

The Hidden Threat of AI Manipulation: How Directed Bias Attacks Are Reshaping Brand Perception

Understanding AI-Driven Brand Manipulation

The landscape of brand reputation management has shifted dramatically with the rise of artificial intelligence. While traditional threats like data breaches and negative reviews remain concerns, a more insidious danger lurks beneath the surface: directed bias attacks on brand reputation. These sophisticated manipulation techniques don’t just alter how humans perceive brands; they change how AI systems understand and present them.

The Mechanics of Directed Bias Attacks

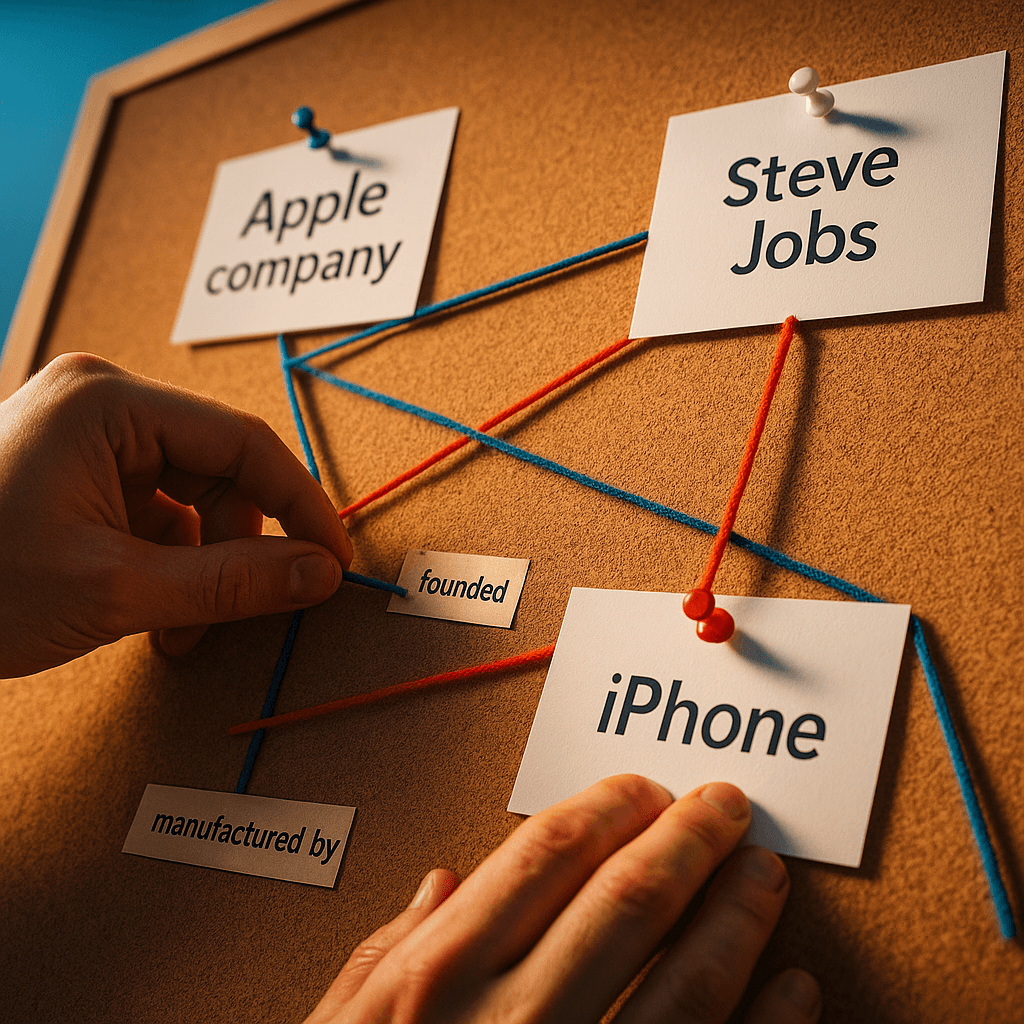

Unlike conventional cyber threats, directed bias attacks target the vast data ecosystem that powers AI models. Bad actors systematically inject false narratives, manipulated reviews, and fabricated expert opinions across the internet. When AI systems consume this information, they incorporate these distortions into their knowledge base, affecting how they respond to user queries about brands and products.

What makes these attacks particularly effective is their subtlety. Rather than launching direct assaults, attackers exploit associative bias, creating negative connections through careful semantic positioning. The strategy works by surrounding target brands with unfavorable contexts, sometimes without even naming them explicitly.
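One way to make associative bias measurable is to count how often a target term appears close to negative vocabulary. The sketch below is illustrative only: the `NEGATIVE_TERMS` lexicon, the function name, and the window size are assumptions, and the target must be a single token (a brand name or a category keyword).

```python
import re

# Hypothetical lexicon; a real audit would use a larger, curated list.
NEGATIVE_TERMS = {"scam", "recall", "lawsuit", "unsafe", "fraud"}


def negative_cooccurrence_rate(texts, target, window=8):
    """Fraction of target mentions with a negative term within `window` tokens."""
    target = target.lower()
    mentions = 0
    flagged = 0
    for text in texts:
        # Lowercase and split into simple word tokens.
        tokens = re.findall(r"[a-z0-9']+", text.lower())
        for i, token in enumerate(tokens):
            if token != target:
                continue
            mentions += 1
            # Look at the tokens on both sides of the mention.
            nearby = tokens[max(0, i - window): i + window + 1]
            if NEGATIVE_TERMS.intersection(nearby):
                flagged += 1
    return flagged / mentions if mentions else 0.0
```

Tracking this rate over time for a brand and for its category keywords could show whether unfavorable context is accumulating, though a rising rate alone does not prove a coordinated attack.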

Authority Hijacking and Synthetic Credibility

One prevalent tactic involves creating seemingly authoritative content: fake research papers, manufactured expert testimonials, and counterfeit case studies. AI systems cannot always distinguish fabricated sources from genuine ones, so they may treat these materials as legitimate. The challenge in combating these attacks lies in their distributed nature. It’s not about correcting a single false statement but about addressing a complex web of misleading signals spread across countless digital touchpoints.

The Invisible Hand of Prompt Manipulation

Another sophisticated method involves embedding hidden instructions within seemingly innocent text. These carefully crafted signals influence AI models during training or interaction phases, creating subtle biases that favor certain narratives over others. While human readers might miss these manipulative elements, machines may process them as direct instructions, leading to skewed outputs that can damage brand perception over time.
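A rough sketch of how hidden signals might be surfaced in a piece of text follows. It checks for three things: invisible Unicode format characters, HTML comments, and instruction-like phrases. The patterns and the function name are assumptions chosen for illustration; real injected text varies widely and would evade a list this short.

```python
import re
import unicodedata

# Illustrative patterns only, not an exhaustive detector.
INSTRUCTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.I),
    re.compile(r"when asked about .{1,60}, (say|respond|answer)", re.I),
]
HTML_COMMENT = re.compile(r"<!--(.*?)-->", re.S)


def find_hidden_signals(text):
    """Return a list of (kind, detail) findings for one piece of text."""
    findings = []
    # Unicode category "Cf" covers format characters such as zero-width spaces.
    invisible = [ch for ch in text if unicodedata.category(ch) == "Cf"]
    if invisible:
        findings.append(("invisible_chars", len(invisible)))
    # HTML comments are not rendered for readers but remain in the raw text.
    for match in HTML_COMMENT.finditer(text):
        findings.append(("html_comment", match.group(1).strip()))
    # Strip invisible characters so they cannot split a phrase apart.
    visible = "".join(ch for ch in text if unicodedata.category(ch) != "Cf")
    for pattern in INSTRUCTION_PATTERNS:
        for match in pattern.finditer(visible):
            findings.append(("instruction_phrase", match.group(0)))
    return findings
```

A scan like this is best treated as a first-pass filter that queues pages for human review rather than as proof of manipulation.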

Impact on Digital Discovery and Brand Trust

Directed bias attacks on brand reputation reveal a crucial truth: AI-generated content reflects the quality and integrity of its training data. As more brands rely on AI-driven discovery platforms, the potential impact of manipulated training data grows with them. This creates a new imperative for brands to monitor not just direct mentions but the entire narrative ecosystem surrounding their market category.

Moving Beyond Traditional Monitoring

Simple keyword tracking and basic reputation management tools no longer suffice. Brands need sophisticated systems that analyze digital ecosystems in real time, identifying harmful narrative patterns and their sources. These platforms must use AI to fight AI, detecting disinformation campaigns before they contaminate training datasets.

Legal Frameworks and Accountability

The regulatory landscape surrounding AI-driven reputation damage remains complex. Traditional defamation laws struggle to address harm caused by distributed narrative manipulation. When an AI system promotes false information seeded through countless subtle signals, determining liability becomes challenging. Should we hold content creators, AI developers, or distribution platforms responsible?

Strategic Implications for Brand Protection

Modern brand protection requires a fundamental shift in approach. Success depends on actively shaping the narratives that AI models learn from and generate. This means implementing robust narrative defense strategies and maintaining constant vigilance over how AI systems represent your brand across various discovery platforms.

Building Resilient Brand Narratives

Protecting brand reputation in an AI-driven world requires collaboration between marketing teams, AI providers, and narrative intelligence services. Establishing clear brand bias benchmarks and regular AI audits helps identify potential threats and inform corrective actions. The goal isn’t just to react to problems but to build resilient brand narratives that can withstand manipulation attempts.
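As a minimal sketch of what a brand bias benchmark could look like in practice, the example below compares per-prompt scores from a current audit against a stored baseline and flags the prompts where the score has dropped. Everything here is an assumption made for illustration: the function name, the 0-to-1 favorability scale, the tolerance, and the idea that each audit prompt has already been scored by some separate process.

```python
def flag_drifted_prompts(baseline, current, tolerance=0.15):
    """Return (prompt, drop) pairs where the score fell by more than `tolerance`.

    `baseline` and `current` map audit prompts to favorability scores,
    assumed here to lie between 0 (negative) and 1 (positive).
    """
    flagged = []
    for prompt, base_score in baseline.items():
        if prompt not in current:
            continue  # Prompt was not asked in the current audit.
        drop = base_score - current[prompt]
        if drop > tolerance:
            flagged.append((prompt, round(drop, 3)))
    # Largest drops first, so the worst regressions get reviewed first.
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical audit data for a fictional brand.
baseline = {"Is Acme safe?": 0.5, "Best blender brands?": 0.75}
current = {"Is Acme safe?": 0.0, "Best blender brands?": 0.75}
print(flag_drifted_prompts(baseline, current))
```

Keeping the prompt set fixed between audits is the design choice that matters most here, since it makes a change in score attributable to the AI system rather than to a change in the question.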

What defensive strategies will emerge as artificial intelligence continues to reshape how brands connect with their audiences, and how can organizations stay ahead of increasingly sophisticated manipulation techniques?